In performance marketing today, the primary bottleneck is no longer the media buy or the platform algorithm. It is the creative asset. Product teams often find themselves trapped in a cycle where the cost of producing high-fidelity visual content exceeds the potential return on a test campaign. That friction pushes teams to launch with “good enough” stock photos or to wait weeks for a design queue to clear.

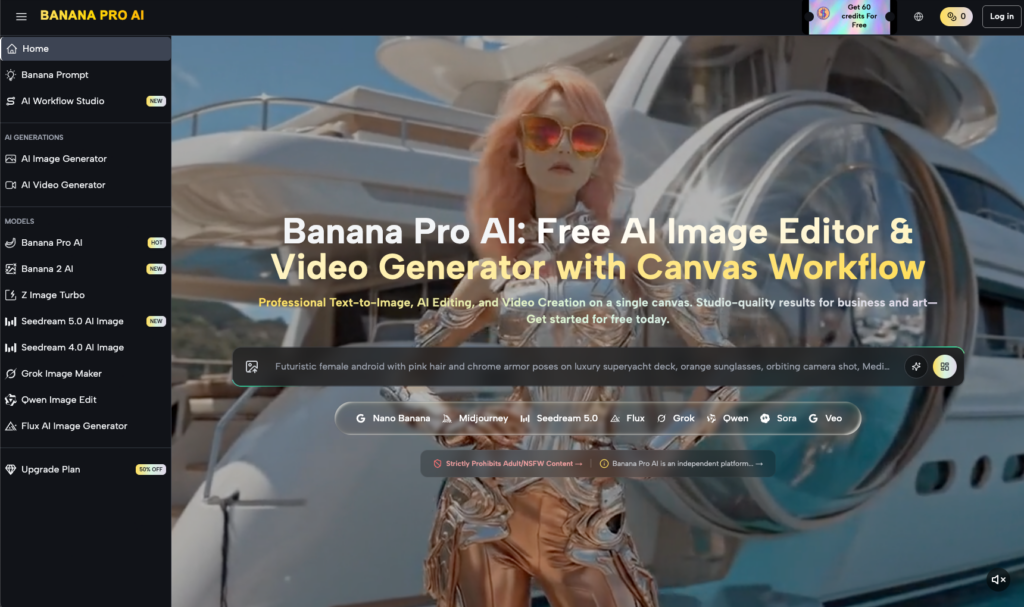

The shift toward generative media has changed the math, but not necessarily the effort. Many early adopters found that access to a prompt box doesn’t solve the production problem; it replaces it with a new problem of curation and refinement. Moving from a raw generation to a deployable ad creative requires a specific kind of workflow, one that prioritizes velocity over the slow, traditional pursuit of perfection. Using Nano Banana within a structured production environment lets teams bridge this gap, treating AI not as a magic wand but as a high-speed modular workbench.

The Death of the Static Creative Pipeline

Traditional creative workflows are linear. You brief a designer, they produce a concept, revisions follow, and finally a set of static assets is exported. If an ad fails, the loop starts over. In a high-velocity environment, this is a recipe for stagnation. Agile teams using Banana AI are instead moving toward a non-linear, “composable” approach.

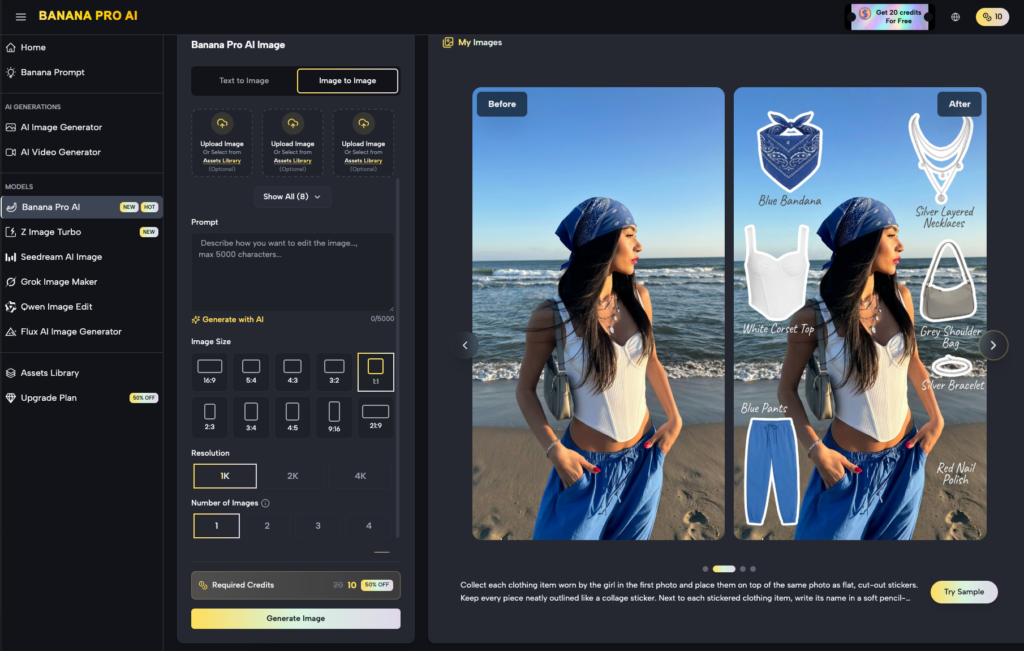

Instead of commissioning a single hero image, teams use these tools to generate the “components” of a creative separately: a background, a specific lighting mood, a product placement scenario. Breaking the asset down limits the cost of any single “failed” generation. If the background is perfect but the product looks slightly off, you don’t scrap the whole thing; you move it into the canvas and iterate on the problematic layer alone.
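To make the layer idea concrete, here is a minimal Python sketch of a creative stored as independently generated layers, where fixing one layer leaves the others untouched. The `Layer` type, the layer names, and the prompts are hypothetical illustrations, not part of any Banana AI API, and the actual call to a generation model is deliberately left out.

```python
from dataclasses import dataclass, replace
from typing import Dict


@dataclass(frozen=True)
class Layer:
    """One independently generated component of a creative (hypothetical model)."""
    name: str      # e.g. "background", "lighting", "product"
    prompt: str    # prompt or reference description used for this layer
    version: int = 1


def regenerate_layer(layers: Dict[str, Layer], name: str, new_prompt: str) -> Dict[str, Layer]:
    """Return a new layer stack in which only `name` gets a new prompt and version.

    All other layers are carried over as-is, so a good background is not
    thrown away because the product render came out wrong.
    """
    if name not in layers:
        raise KeyError(f"unknown layer: {name}")
    updated = dict(layers)  # shallow copy; Layer instances are immutable
    old = updated[name]
    updated[name] = replace(old, prompt=new_prompt, version=old.version + 1)
    return updated


stack = {
    "background": Layer("background", "warm oak desk, shallow depth of field"),
    "lighting": Layer("lighting", "soft morning window light"),
    "product": Layer("product", "matte black bottle, centered"),
}
fixed = regenerate_layer(stack, "product", "matte black bottle, label facing camera")
print(fixed["product"].version)  # 2
```

Returning a new stack instead of mutating the old one keeps every earlier version available, which matters once variants start competing in live tests.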

Leveraging the AI Image Editor for Precision

One of the most common frustrations with early-stage generative tools was the lack of granular control. You could ask for a “blue sky,” and you might get a “stormy ocean.” For a brand manager, this randomness is a dealbreaker. This is where the AI Image Editor becomes the primary tool for the operator.

Rather than relying on the “pray-and-prompt” method, sophisticated users lean on image-to-image capabilities to preserve structure. Given a rough sketch or a low-fidelity photo of a prototype, the AI Image Editor can “skin” that rough layout with realistic textures, shadows, and environmental lighting, keeping the composition while upgrading the aesthetic quality.

However, we must be realistic about the current state of the technology. Even with advanced tools, the AI Image Editor still struggles with certain spatial relationships. If you are trying to place a product inside a glass jar with specific refractive properties, the AI will likely hallucinate the edges. In these moments, the “velocity over perfection” mindset is critical: is the hallucination visible at the scale of a mobile ad? If not, the asset is ready to ship.

Scaling Iterations with Nano Banana Pro

When a product enters its launch phase, the required volume of assets multiplies. You need vertical assets for Instagram Stories, square posts for the feed, widescreen banners for display ads, and perhaps a 15-second motion clip for TikTok. Manually resizing and redesigning each of these for every platform is a waste of human capital.

With Nano Banana Pro, marketers can maintain a unified visual language across multiple formats. The workflow starts from a core “seed” or reference image and then relies on the model’s grasp of that style to generate variations.

This isn’t just about changing aspect ratios; it’s about changing the narrative context. One set of ads might feature the product in a minimalist home office, while another version, generated in minutes, places it in a high-energy outdoor setting. The product remains the focal point, but the “vibe” is swapped to test which audience segment responds better. Rapid A/B testing at this level used to be available only to brands with massive creative budgets.
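The “one reference, many contexts and formats” workflow is essentially a cross product. The sketch below builds a job list that pairs every scene with every placement, all pointing at the same reference image. The aspect ratios (9:16 for Stories, 1:1 for the feed, 16:9 for display), the 1080-pixel short edge, and the job dictionary layout are assumptions for illustration, not Nano Banana Pro’s request schema; check each platform’s current specs before relying on them.

```python
from itertools import product
from typing import Dict, List, Tuple

# Assumed placements and aspect ratios (width, height); verify against platform specs.
FORMATS: Dict[str, Tuple[int, int]] = {
    "stories": (9, 16),
    "feed_square": (1, 1),
    "display_wide": (16, 9),
}

# Hypothetical narrative contexts to test against each other.
SCENES = ["minimalist home office", "high-energy outdoor setting"]


def size_for(ratio: Tuple[int, int], short_side: int = 1080) -> Tuple[int, int]:
    """Scale an aspect ratio so its shorter edge is `short_side` pixels."""
    w, h = ratio
    if w <= h:
        return short_side, round(short_side * h / w)
    return round(short_side * w / h), short_side


def variant_matrix(reference_id: str, scenes: List[str],
                   formats: Dict[str, Tuple[int, int]]) -> List[dict]:
    """One generation job per (scene, format) pair, all anchored to one reference."""
    jobs = []
    for scene, (fmt, ratio) in product(scenes, formats.items()):
        width, height = size_for(ratio)
        jobs.append({"reference": reference_id, "scene": scene,
                     "format": fmt, "width": width, "height": height})
    return jobs


jobs = variant_matrix("hero-ref-001", SCENES, FORMATS)  # hypothetical reference id
print(len(jobs))  # 6
```

Holding the reference constant and varying only scene and format makes a performance gap between two cells easier to attribute to the variable that actually changed.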

The Transition from Static to Motion

The real frontier for product teams today is video. Static images are increasingly ignored in high-density feeds. The challenge is that AI video generation is notoriously difficult to control. It is prone to “morphing” and inconsistent frame-to-frame details.

The most effective use of Banana Pro for video isn’t a 30-second cinematic commercial. Instead, teams use it to create “micro-moments”: steam drifting off a coffee cup, shadows shifting across a metallic surface, or a slow zoom into a high-detail texture. These 3-to-5-second clips are then layered with typography and UI elements in a traditional editor.

This hybrid approach acknowledges a hard truth: full AI video is not yet ready for high-stakes brand storytelling where every pixel must be perfect. However, for “scroll-stoppers” in social feeds, the slight “dreamy” quality of AI motion can actually be an advantage, drawing the eye precisely because it doesn’t look like standard videography.

The Reality of Consistency and Brand Safety

One major limitation that every operator must account for is “prompt drift.” Even within a sophisticated suite like Banana AI, the model doesn’t “know” your brand guidelines. It doesn’t know that your specific shade of brand blue is #0047AB and not #0056D2.

If you rely solely on text prompts, your creative output will eventually lose its visual cohesion. Professional content teams counter this by anchoring generations with ControlNets or specific reference images. By feeding the tool a brand-approved color palette and a specific lighting reference, you constrain the AI to stay within your brand’s lanes.
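Anchors reduce drift, but a cheap post-generation check can still flag assets that wander from the palette. The sketch compares a color sampled from a generated image (sampling itself is assumed to happen elsewhere) against brand hex codes, using the two blues mentioned above. Plain RGB distance and the tolerance of 10 are simplifying assumptions; a perceptual metric such as CIELAB ΔE tracks what viewers actually notice more closely.

```python
import math
from typing import List, Tuple


def hex_to_rgb(code: str) -> Tuple[int, int, int]:
    """Convert '#RRGGBB' to an (r, g, b) tuple of 0-255 integers."""
    code = code.lstrip("#")
    if len(code) != 6:
        raise ValueError(f"expected #RRGGBB, got {code!r}")
    r, g, b = (int(code[i:i + 2], 16) for i in (0, 2, 4))
    return r, g, b


def rgb_distance(a: str, b: str) -> float:
    """Euclidean distance in RGB space (crude; not perceptually uniform)."""
    return math.dist(hex_to_rgb(a), hex_to_rgb(b))


def check_brand_color(sampled: str, palette: List[str],
                      tolerance: float = 10.0) -> Tuple[str, float, bool]:
    """Return the closest palette color, its distance, and whether it is within tolerance."""
    closest = min(palette, key=lambda c: rgb_distance(sampled, c))
    distance = rgb_distance(sampled, closest)
    return closest, distance, distance <= tolerance


BRAND_PALETTE = ["#0047AB", "#FFFFFF"]  # brand blue from the text, plus white (assumed)
closest, distance, ok = check_brand_color("#0056D2", BRAND_PALETTE)
print(closest, round(distance, 1), ok)  # #0047AB 41.8 False
```

A failed check is a prompt to regenerate or color-correct that asset, not proof the model ignored the reference; lighting alone can shift sampled values.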

There is also the issue of text. Most generative models still treat text as a visual pattern rather than a linguistic instruction. If you need your product name to appear perfectly on a label, do not expect the AI to render it reliably. The most efficient workflow is to generate an “unlabeled” version of the product and then overlay the vector logo in a traditional design tool. Forcing the AI to get the typography right is a significant time-sink that contradicts the goal of velocity.

Managing the Canvas Workflow

The “Canvas” approach is perhaps the most significant shift in how AI assets are built. In a traditional generation, you get a flat file. In a canvas workflow, you are working in a space where elements can be expanded, outpainted, or selectively replaced.

Imagine you have a great shot of a product, but the frame is too tight for a YouTube header. Using “Outpainting” features within the Nano Banana ecosystem, the operator can extend the environment beyond the original borders of the image. The AI looks at the existing textures—the grain of the wood, the bokeh of the background—and extrapolates them outward.

This is where “practical judgment” outweighs “prompting skill.” An experienced operator knows when to stop: outpainting too far tends to introduce visible artifacts or repeating patterns. The goal isn’t a 10,000-pixel landscape; it’s just enough “bleed” that the graphic designer has room to place a “Buy Now” button without obscuring the product.
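Knowing when to stop can be partly reduced to arithmetic. The sketch computes the padding needed to bring a frame to a target aspect ratio without cropping, plus the share of the final canvas the model would have to invent, so an operator can apply a stopping rule before generating. The 16:9 target, the 1080×1080 source, and the 50% ceiling are illustrative assumptions, not Nano Banana settings.

```python
from typing import Tuple


def outpaint_padding(width: int, height: int,
                     ratio_w: int, ratio_h: int) -> Tuple[int, int, int, int]:
    """Pixels to add (left, right, top, bottom) so the frame reaches
    ratio_w:ratio_h without cropping the original."""
    if width * ratio_h < ratio_w * height:
        # Too narrow for the target: extend left and right.
        new_width = -(-height * ratio_w // ratio_h)  # integer ceiling division
        extra = new_width - width
        return extra // 2, extra - extra // 2, 0, 0
    # Too wide (or already exact): extend top and bottom.
    new_height = -(-width * ratio_h // ratio_w)
    extra = new_height - height
    return 0, 0, extra // 2, extra - extra // 2


def generated_fraction(width: int, height: int,
                       padding: Tuple[int, int, int, int]) -> float:
    """Share of the final canvas that the model has to invent."""
    left, right, top, bottom = padding
    total = (width + left + right) * (height + top + bottom)
    return 1 - (width * height) / total


MAX_GENERATED = 0.5  # hypothetical stopping rule: never invent more than half the frame

pad = outpaint_padding(1080, 1080, 16, 9)  # square product shot -> 16:9 header
share = generated_fraction(1080, 1080, pad)
print(pad, share, share <= MAX_GENERATED)  # (420, 420, 0, 0) 0.4375 True
```

Integer ceiling division avoids floating-point surprises when the target ratio (such as 16/9) has no exact binary representation.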

Data-Driven Creative Refinement

The true power of integrating Nano Banana Pro into a marketing stack is the ability to close the loop between performance data and creative production.

If the data shows that ads with “warm lighting” earn a click-through rate 20% higher than ads with “cool lighting,” the creative team doesn’t need to reshoot the product. They can take the winning compositions and use the AI Image Editor to “re-light” them. In effect, the AI becomes a post-production filter that can change the mood of an asset without changing its core content.
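Before re-lighting a library on the strength of a “20% higher” click rate, it is worth confirming the gap is not noise. Below is a relative-lift calculation and a standard pooled two-proportion z-test, one common (but not the only) significance check for click-through rates. The click and impression counts are invented for illustration, and the test assumes independent impressions and reasonably large samples.

```python
import math
from typing import Tuple


def ctr_lift(clicks_a: int, imps_a: int, clicks_b: int, imps_b: int) -> float:
    """Relative lift of variant A's click-through rate over variant B's."""
    ctr_a = clicks_a / imps_a
    ctr_b = clicks_b / imps_b
    return (ctr_a - ctr_b) / ctr_b


def two_proportion_z(clicks_a: int, imps_a: int,
                     clicks_b: int, imps_b: int) -> Tuple[float, float]:
    """Pooled two-proportion z-test. Returns (z, two-sided p-value)."""
    p_a = clicks_a / imps_a
    p_b = clicks_b / imps_b
    pooled = (clicks_a + clicks_b) / (imps_a + imps_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / imps_a + 1 / imps_b))
    z = (p_a - p_b) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical results: warm lighting vs. cool lighting, 50,000 impressions each.
warm_clicks, warm_imps = 600, 50_000   # CTR 1.2%
cool_clicks, cool_imps = 500, 50_000   # CTR 1.0%

lift = ctr_lift(warm_clicks, warm_imps, cool_clicks, cool_imps)
z, p = two_proportion_z(warm_clicks, warm_imps, cool_clicks, cool_imps)
print(f"lift={lift:.0%} z={z:.2f} p={p:.4f}")
```

With these made-up numbers the 20% lift is unlikely to be chance; with a tenth of the impressions, the same lift would not clear a conventional 5% threshold, which is exactly the case where re-lighting the whole library would be premature.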

This creates a “living” creative library. Instead of being “final” once exported, assets become “versions” that evolve based on how the market reacts. That is the mark of a tool-savvy workflow: using technology to remove the finality of the design process and allow constant, low-cost evolution.

Addressing the “Uncanny Valley” in Product Assets

We must be cautious about over-relying on AI for certain types of lifestyle content. While the technology excels at landscapes, textures, and inanimate objects, it still struggles with complex human interactions, particularly hands, eyes, and athletic movements.

If your ad creative relies on a person holding your product, the “uncanny valley” effect can erode brand trust. Consumers are becoming increasingly sensitive to AI-generated humans. A product team that ignores this risk in the name of “velocity” may see its conversion rates drop because the ad feels “fake” or “dishonest.”

The workaround? Use AI for the environment, the lighting, and the abstract elements, but keep the human elements authentic. Compositing a real photo of a hand onto an AI-generated background is often faster and more effective than trying to prompt the AI into getting the anatomy correct.
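Compositing a real cutout onto a generated background comes down to the standard alpha “over” operation: each output pixel is `alpha * foreground + (1 - alpha) * background`. The pure-Python sketch below shows that math on tiny pixel grids; in practice an image library would do the work (Pillow, for example, provides `Image.alpha_composite` for RGBA images). It assumes a straight (non-premultiplied) alpha mask from the cutout and an opaque background of the same size; the pixel values are hypothetical.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]


def composite_over(fg: List[List[Pixel]], alpha: List[List[float]],
                   bg: List[List[Pixel]]) -> List[List[Pixel]]:
    """Straight-alpha 'over' composite onto an opaque background.

    fg, bg: rows of (r, g, b) tuples in 0-255; alpha: rows of floats in [0, 1],
    where 1 keeps the real photo (e.g. the hand) and 0 keeps the AI background.
    """
    if not (len(fg) == len(alpha) == len(bg)):
        raise ValueError("fg, alpha and bg must have the same number of rows")
    out: List[List[Pixel]] = []
    for fg_row, a_row, bg_row in zip(fg, alpha, bg):
        row: List[Pixel] = []
        for fp, a, bp in zip(fg_row, a_row, bg_row):
            r, g, b = (round(a * f + (1 - a) * k) for f, k in zip(fp, bp))
            row.append((r, g, b))
        out.append(row)
    return out


hand = [[(200, 100, 0), (200, 100, 0)]]            # hypothetical cutout pixels
mask = [[1.0, 0.5]]                                 # edge pixel is half-transparent
background = [[(100, 100, 100), (100, 100, 100)]]  # hypothetical generated backdrop
print(composite_over(hand, mask, background))  # [[(200, 100, 0), (150, 100, 50)]]
```

The soft edge values in the mask are what hide the seam; a hard 0/1 cutout tends to look pasted on, which undermines the authenticity the real photo was meant to provide.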

Conclusion: Re-Centering the Human Operator

The goal of using Nano Banana and the wider Banana Pro suite isn’t to replace the creative director; it’s to liberate them from the mundane aspects of asset production. When you can generate 50 variations of a background in the time it takes to brew a pot of coffee, your job shifts from “maker” to “curator.”

By embracing a “velocity over perfection” mindset, product teams can ship more, test more, and find the “winning” creative faster. The limitations of the technology (the occasional hallucination, the struggle with text, the jitter in video) are not dealbreakers. They are simply the boundaries within which a smart operator works.

In the end, the brands that win aren’t the ones with the most polished AI prompts, but the ones that can turn a “good” idea into a “shippable” asset in minutes rather than days. The future of launch assets is modular, iterative, and fast.